Test Driven Development: why is it not so popular yet?

And why there is so much hate in my heart.

Introduction: tests are fundamental in production

I believe that every decent professional with some years of experience under their belt agrees that testing is fundamental to a good workflow and to producing good code. Not only manual testing: automated tests, such as unit and integration tests, are a must if we want to produce great code.

I totally agree with that, and I would be surprised to meet an experienced programmer who believes testing is useless in general. That programmer would need a solid argument for why it is useless in the particular case or project they are working on for me to agree. But it would take a divine revelation directly from God for me to agree that it is useless in general. What I want to say is that, in my experience, it is accepted that tests are needed in every project. At least unit tests and integration tests.

That being so, it would seem that I am a TDD advocate and this post does not need to go on any longer. We are happy. Right?

What seems to be wrong with TDD

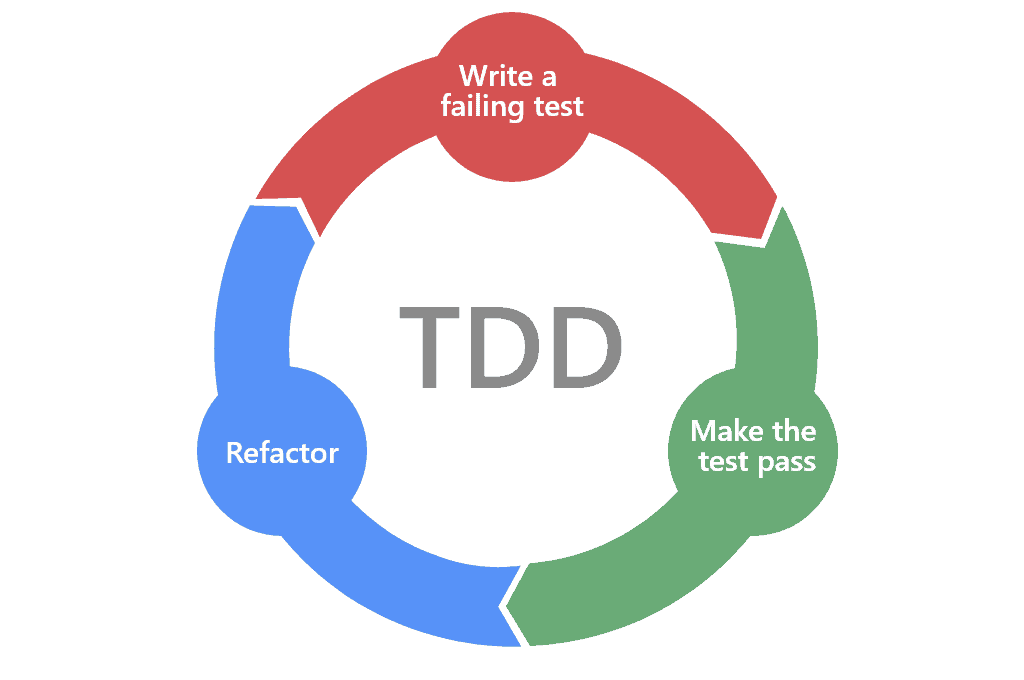

It was funny to me that they invented a name for something that seems to be assumed by everyone I have met and worked with. However, I discovered there are some things that make TDD a different approach. It claims that we should not write the code first: we have to write the tests, let them fail, and then write the code that makes them pass.

It is a good approach. The tests would be the “document” that states the specification of the system, the conditions it has to check, and its behaviors. That is OK in some scenarios, but I think that it won’t work in every scenario. If I am honest, it is weird to write tests for code that doesn’t exist. Although I get it: the test checks the expected behavior of the code we need to write. As long as ideas stay in the realm of ideas, it makes sense, but when you go to real situations, my experience is that you cannot always work like that.

Why? Because sometimes, in my own experience, you need to play a bit with the code and the problem you have to solve to figure out how you have to solve it.

When there is no code yet, meaning the project is brand new, the first step is to think about the requirements and what the code has to do, to figure out the basic steps that need to be implemented. And on top of that, the data structures to be used, the modules, the general structure of the code logic… I honestly think that in that situation it does not make much sense to try to write any tests, no matter how basic they are. There is this moment in which you are investigating how the program is supposed to be. For me, that moment requires focusing just on the code itself, on the logic and on the implementation of a solution. How would I write a unit test when I still don’t know the functions, classes or modules the code will have? Or worse, when I have no clear idea how the general logic will be split in the code? There is a discovery phase every time you start a new project in which you try to figure out how it should work.

If you write the wrong test, or the wrong signature for the function under test, you have to keep rewriting it. And what happens when you get the logic or the specification wrong? In an ideal world, that would never happen, but in the real world that is pretty much always the case, let alone when you are working in agile-like organizations, where requirements change during the development process. If you have the wrong specifications because the problem is new, you will have broken tests that need rewriting. That means you have to maintain two different sets of code that sometimes differ in their assumptions about how the logic should work.

I guess what I am trying to say is that sometimes it feels to me that starting with tests would delay you and potentially confuse you about what to do. There is also the point that if there are tests written, developers tend to consider the tests as a source of truth for the code. And if there is a refactor at the beginning of the project, you have double the amount of code to be refactored.

I can say, though, that my doubts about test driven development are about the first stages of a project, when the ideas are still fuzzy at best. And about the fact that it apparently declares a “good way” and a “bad way” to design and write tests and code.

The cult

As I wrote in a post regarding CleanCode, what I noticed is that there are people defending the methodology as if it were a set of religious dogmas. Besides that, I found developers who told me “I cannot write code without first having tests that tell me what the code has to do”. That is weird… I mean, what they are saying is that the first thing they need to think about is what logic they have to implement before writing the first line of code. But that is something everyone does! It is the basic step to solve any problem, coding problem, math problem… you first need to focus on the problem and understand it to start solving it!

But it seems that these individuals I talk about -and I am not necessarily talking about the above conversation, but real people I met at work- follow some ceremonial steps to do the same thing: understand the logic of the problem. And if they don’t do so by writing tests, they seem incapable of thinking about that and writing code. That is, if there is no test suite configured in the project, they seem to imply they could not start working on that code until they create the test project, compilation config, test suite, and test code. I think that is a limiting situation for a developer.

It is also important to notice something: the fact that people are in the “cult” and take it as a dogma does not mean TDD is wrong. It may be that those people are not flexible enough to understand that it is just another tool in the developer's toolbox.

I became suspicious of any kind of cult-like approach to writing code, as it indicates a potential tendency to follow strict rules without thinking about whether that is the right approach for the situation and the problem at hand.

I follow some interesting people on X (previously Twitter) who are strong advocates of TDD, like the one in the X thread shown above. And they tend to share some absolute statements about TDD as if it were the one and only way to develop code. I got involved in some conversations, mainly asking questions about the statements. And in that experience I noticed that no matter what you answer, no matter what situation you mention where TDD does not seem to be a very good approach to start with… they always find something else that is wrong. It is never the TDD approach that is not suited to that particular case. It is something else or someone else to blame, as TDD is perfect. That is cult-like thinking to me.

Conclusion

I think TDD is just a name for a good practice, or better yet, it is another way of saying professional development. As with SOLID principles and Clean Code, there was a time when those practices were not known (think about the early days of software), and then they became famous and fundamental for software development. But nowadays they are the usual approach to development for most developers with some experience. Or at least, they are the principles and approaches to code that every experienced developer has in mind when they think about software development. Another thing is the companies, which may have a bad approach to it. But that is another story.