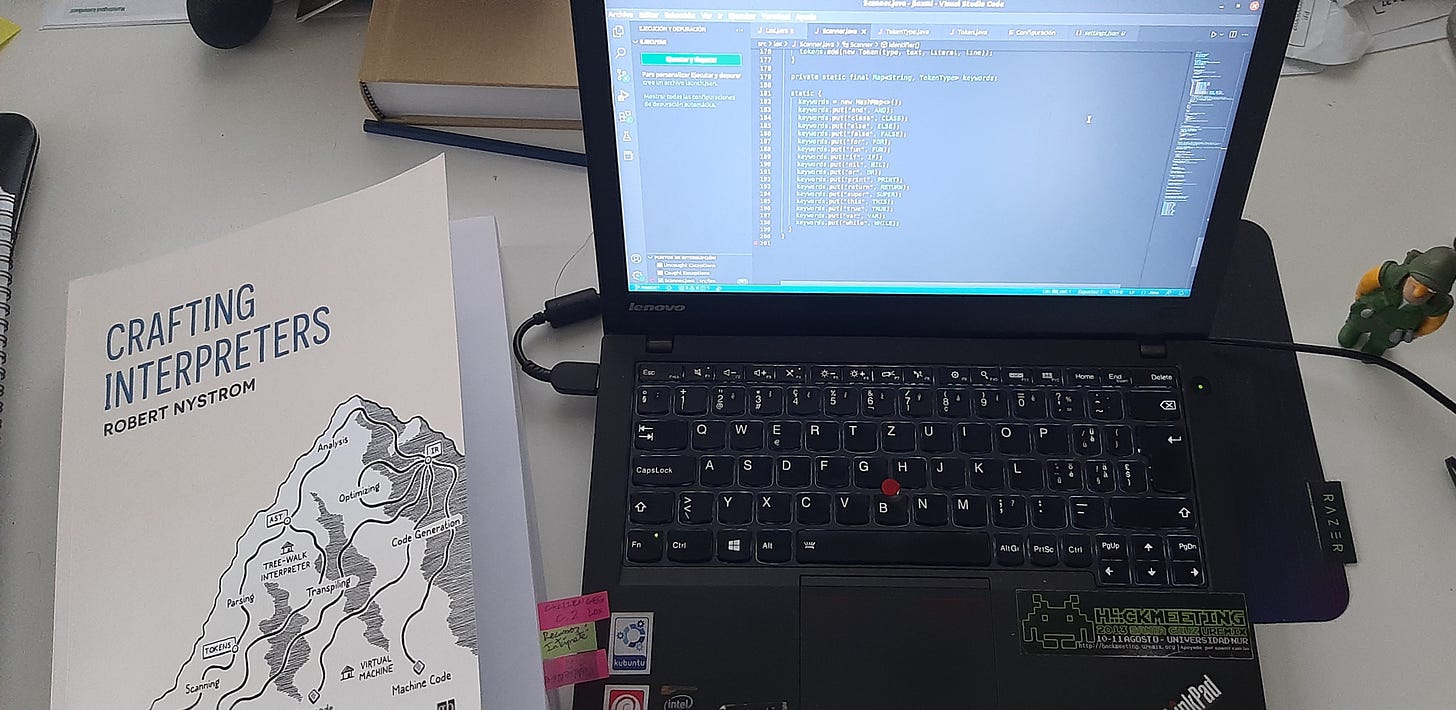

I mentioned somewhere that I am reading the book Crafting Interpreters by Robert Nystrom. The book is an introduction to how a compiler or interpreter works by building a small interpreter in Java first, and in C later for a toy language called Lox.

I never learnt much about compilers, as I didn’t study CS but physics. So in my career as a software developer I never had the need to learn the basics. That was an obscure area of programming, as I tried to imagine how a piece of code could be properly converted into something executable. But that was always an interesting topic for me, waiting for a time when I could go deep into it. And here I am, getting into it. And for the moment, I am loving it!

I just finished chapter 4, which is the first one where you write code, as the previous ones are introduction to the different parts a compiler or interpreter should have. Chapter 4 is where you start building, first of all, the scanner that will read the code and tokenize it so it can be parsed later. Very interesting thing, as it builds the knowledge one step at a time. I opened a repository where I will be adding the code and different branches with some challenges and exercises the author proposes to the reader, you can check it here.

Lexical grammars: regular and not regular

Essentially, the scanner will just check the grammar and try to identify tokens, that means, characters or group of characters that have special meaning for the language. That is the usual operators (+, -, /, (), {}…), numbers and identifiers that are the keywords of the language (like “class”, “for”, etc).

What is more interesting is the challenges the author proposes after the chapter is done. The first one is finding out why there are some languages that have not regular lexical grammars. More exactly, what is a regular lexical grammar? Obviously it is a grammar that is regular, but what does it means?

Well, I decided to answer that and went into a rabbit hole! The concept is rooted in formal language theory, Chomsky hierarchy and other obscure matters. But what the heck, if I was able to graduate in theoretical physics, I should be able to grasp the basic ideas, right?

As usual in this forays intro unknown territories, the key is to try to get the very basic pieces of information, and build from there. That means, reading without stopping in every concept that is not understood, in the hopes of acquiring a general view of the topic.

So I started with Chomsky hierarchy. I didn’t understand most of it, but one key concept: a regular grammar is a type-3 grammar in this hierarchy and that means a finite-state automaton can accept it. In essence, it means that the language can be scanned using recursion and applying the same rules over and over. That is, it is context free and the rules applied in step n has no knowledge on what happened on n-1, and will not affect the process on n+1.

Note: the condition of context free is applied to tokens of the language, not to each character that comprises the source code we are trying to scan. This is something that gave me a bit of a headache, as if you apply this idea to the raw text code, no language could be context free, but a simple calculator (if I am not wrong).

Not-regular grammars would be anything else. Obviously there will be a lot of differences involved, but as I am just working with a pretty regular grammar as of now, I will not go deeper for the moment. But…

Why Python or Haskell don’t have regular grammars

As I understood, the reason for this is, in the case of Python, the indentation sensitivity. As the code written in it depends on the indentation to determine the scope, the grammar cannot be regular in this case, as it depends strongly not just in the indentation a line has, but on the indentation of the preceding lines. So a simple finite-state automaton cannot deal with it properly. You need to keep a structure of the lines you read before to really process the line you are working on the moment, so there should be some stack-ish structure to keep track of that. And the reason for this is that each lexical element can have different meanings depending on some context, which is something that does not occur when you are using a regular grammar.

And for Haskell is the same. Both languages use indentation to structure logic of the code, so using a regular, context-free, grammar is not possible. Because you need the context, the indentation in this case, of the line you are scanning and of the previous ones in order to make sense of the code.

I am sure there are other reasons for them to not have a regular grammar regarding the language definition, but for now this is where I am ok with the answer and move on.

What is next

There is only a question that picks my interest at this point, and hopefully I will be able to answer it soon. It relates to languages like C, or any language that uses {} and () to structure the logic of the code. Indentation in those languages is a matter of readability, so the questions I have, and may answer in the future, is:

C-like languages have a regular grammar or not?

Is it only the indentation sensitivity what makes Python not regular?

I suspect that the answer is NO to both questions. But that is it for today!

Bonus

In the process of writing the lexer, which is also called scanner, the book decides to interpret every number as a double precision one, and it decides to only accept pure decimal numbers in the form of 0.xxx instead of accepting also numbers without a leading 0. I implemented that option in a branch in the repository.

It is not very difficult to understand as it only contains the changes for this. However, I didn’t test it fully yet to be sure it works in every possible corner case. So if anyone find a problem, or a case where it fails or that can explain why what I did is wrong, is more than welcome to comment it here, in the repository or in Twitter (at @PunteroCrudo. And yeah, I know it is a different name than the blog user and the blog itself… I am working on re-branding but it will take a bit). I am here to learn, so please let me know!